Organizing Committee

Ohio State University

Brown University

Carnegie Mellon University

University of California, Irvine

Yale University

Abstract

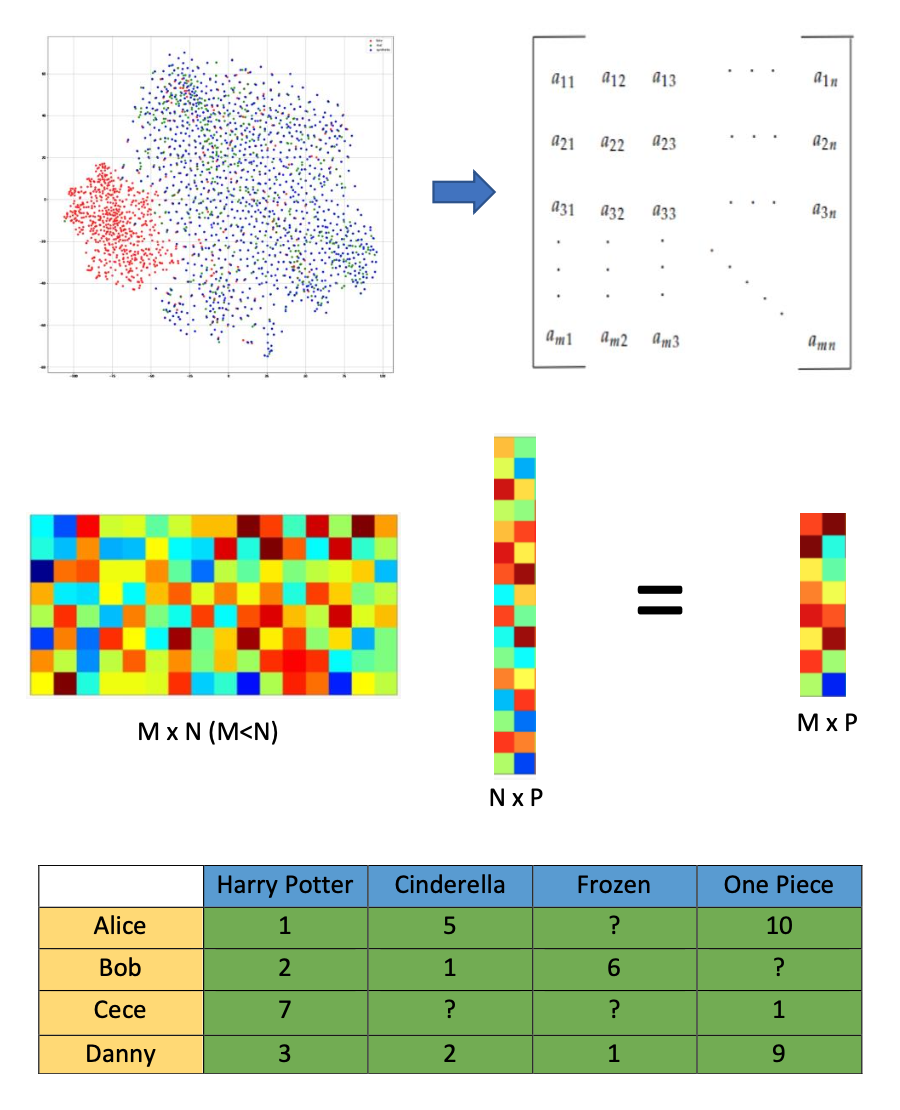

Random matrices play a significant role in modern data science, providing an elegant way to represent both (a) the data and (b) the way we process it. As an example of (a), the classical model of high-dimensional data is a set of points drawn from a certain distribution in a high-dimensional space, which can be represented as a random matrix. An even more natural example is that most data in practice is noisy, so it can be represented as a deterministic matrix plus random noise, that is, a random matrix with a non-zero mean. An example of (b) is data compression, which can be realized by applying a random matrix of smaller size to the data in (a), thereby reducing its dimension. Another example is data completion, where we need to reconstruct data (in the form of a matrix) from a random sub-matrix, given that the original matrix satisfies certain structural constraints (such as being low rank).

To deal with these problems, we need to develop techniques beyond the scope of standard random matrix theory. For example, while the sensitivity of a matrix's spectrum to small random perturbations has been extensively studied in the mean field regime, the same problem for structured random matrices, which allow for more adequate modeling of real-world data, is wide open. As another example, the core mechanism of an artificial neural network is a composition of linear and nonlinear transformations, leading to nonlinear generalizations of random matrices. Our understanding of the mathematical principles behind examples like these is still in its infancy.

The goal of the workshop is to discuss a number of active topics in these areas, focusing on recent theoretical progress and potential applications, in a manner accessible to a wide audience, including researchers in data science, electrical engineering, statistics, and numerical analysis.


Workshop Schedule

Monday, May 20, 2024

8:50 - 9:00 AM EDT

Welcome

9:00 - 9:15 AM EDT

Opening Remarks

9:15 - 10:15 AM EDT

Matrix invertibility and applications

10:15 - 10:45 AM EDT

Coffee Break

10:45 - 11:25 AM EDT

The limiting spectral law of sparse random matrices

11:35 AM - 12:15 PM EDT

Improved very sparse matrix completion via an "asymmetric randomized SVD"

12:25 - 12:30 PM EDT

Group Photo (Immediately After Talk)

12:30 - 2:00 PM EDT

Lunch/Free Time

2:00 - 2:40 PM EDT

Universality of Spectral Independence with Applications to Fast Mixing in Spin Glasses

2:50 - 3:30 PM EDT

Extreme eigenvalues of random Laplacian matrices

3:40 - 4:10 PM EDT

Coffee Break

4:10 - 4:50 PM EDT

Analysis of singular subspaces under random perturbations

5:00 - 6:30 PM EDT

Reception

Tuesday, May 21, 2024

9:00 - 10:00 AM EDT

A new look at some basic perturbation bounds

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:10 AM EDT

Improved Principal Component Analysis for Sample Covariance Matrix

11:20 AM - 12:00 PM EDT

On a Conjecture of Spielman–Teng

12:15 - 2:00 PM EDT

Lunch/Free Time

2:00 - 2:40 PM EDT

Matrix superconcentration inequalities

2:50 - 3:30 PM EDT

Kronecker-product random matrices and a matrix least squares problem

3:40 - 4:10 PM EDT

Coffee Break

4:10 - 5:00 PM EDT

Solving overparametrized systems of random equations

Wednesday, May 22, 2024

9:00 - 10:00 AM EDT

Recent developments in nonlinear random matrices

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:10 AM EDT

Algorithmic Thresholds for Ising Perceptron

11:20 AM - 12:00 PM EDT

Neural Networks and Products of Random Matrices in the Infinite Depth-and-Width Limit

12:10 - 2:00 PM EDT

Lunch/Free Time

2:00 - 2:40 PM EDT

Spectrum of the Neural Tangent Kernel in a quadratic scaling

2:50 - 3:30 PM EDT

The High-Dimensional Asymptotics of Principal Component Regression

3:40 - 4:10 PM EDT

Coffee Break

Confirmed Speakers & Participants

Talks will be presented virtually or in person as indicated in the schedule.

- Elie Alhajjar

RAND

- Lucas Benigni

Université de Montréal

- Tatiana Brailovskaya

Princeton University

- Jonathan Chavez Casillas

University of Rhode Island

- Qianyong Chen

UMass Amherst

- Shabarish Chenakkod

University of Michigan

- Hongmei Chi

Florida A&M University

- Alperen Ergur

UTSA

- Zhou Fan

Yale University

- J. Elisenda Grigsby

Boston College

- Yiyun He

University of California, Irvine

- Vishesh Jain

University of Chicago, Illinois

- Sungwoo Jeong

Cornell University

- Han Le

University of Michigan

- Yuqi Lei

Brown University

- Sivan Leviyang

Georgetown University

- Shuangping Li

Stanford University

- Weiyu Li

Harvard University

- Wenjian Liu

City University of New York

- Ji Meng Loh

New Jersey Institute of Technology

- Jackie Lok

Princeton University

- Hengrui Luo

Lawrence Berkeley National Laboratory / Rice University

- Renyuan Ma

Yale University

- Benjamin McKenna

Harvard University

- Andrea Montanari

Stanford University

- Raj Nadakuditi

University of Michigan

- Hoi Nguyen

Ohio State University

- Oanh Nguyen

Brown University

- Mihai Nica

University of Guelph

- Petar Nizic-Nikolac

ETH Zurich

- Sean O'Rourke

University of Colorado Boulder

- Aaradhya Pandey

Princeton University

- Jing Qin

University of Kentucky

- Elizaveta Rebrova

Princeton University

- Daniel Reichman

Worcester Polytechnic Institute, Worcester, MA

- Elad Romanov

Stanford University

- Julian Sahasrabudhe

University of Cambridge

- Mehtaab Sawhney

MIT

- Calum Shearer

University of Colorado Boulder

- Paul Simanjuntak

Texas A&M University

- Youngtak Sohn

MIT

- Reed Spitzer

Brown University

- Jingye Tan

Cornell University

- Konstantin Tikhomirov

Carnegie Mellon University

- Linh Tran

Yale University

- Phuc Tran

Yale University

- Alexander Van Werde

Eindhoven University of Technology

- Roman Vershynin

University of California, Irvine

- Van Vu

Yale University

- Haixiao Wang

University of California, San Diego

- Jingheng Wang

Ohio State University

- Ke Wang

Hong Kong University of Science and Technology

- Xiong Wang

Johns Hopkins University

- Zhichao Wang

University of California, San Diego

- Lu Wei

Texas Tech University

- Garrett Wen

Yale University

- Haishen Yao

The City University of New York, Queensborough Community College

- Yifan Zhang

University of Texas at Austin

- Ziao Zhang

Brown University

- Yizhe Zhu

University of California, Irvine