
Abstract

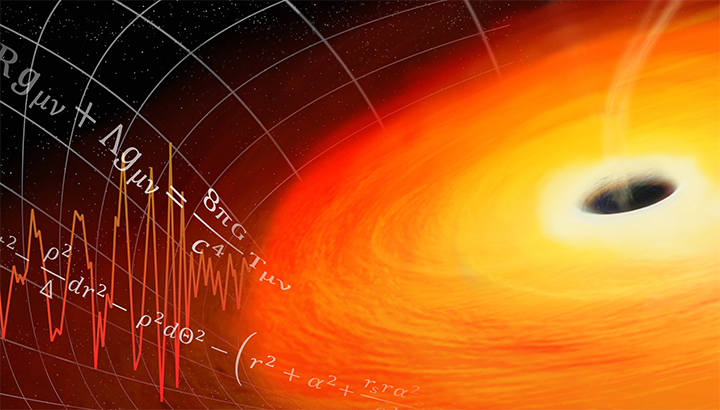

Einstein's field equations, the governing equations of general relativity, are among the most complicated partial differential equations in mathematical physics. These equations predict the existence of gravitational waves, which are propagating disturbances in spacetime itself. In 2016, the first direct observation of these waves, emitted by colliding black holes, was reported, a historic discovery that led to the 2017 Nobel Prize in Physics. This discovery would not have been possible without intense interaction between physicists, mathematicians, and high-performance computing tools. Indeed, numerically solving Einstein's equations for the expected wave signal and processing gravitational-wave datasets were both enabled by advances in algorithms, numerical methods, and access to large computing resources. In this talk, I will focus on the critical role all three played in making this historic discovery and summarize current directions in computational relativity and gravitational-wave data science.

About the Speaker

Adam Tauman Kalai received his BA from Harvard, and MA and PhD under the supervision of Avrim Blum from CMU. After an NSF postdoctoral fellowship at M.I.T. with Santosh Vempala, he served as an assistant professor at the Toyota Technological Institute at Chicago and then at Georgia Tech. He is now a Principal Researcher at Microsoft Research New England. His honors include an NSF CAREER award and an Alfred P. Sloan fellowship. His research focuses on Artificial Intelligence and Machine Learning algorithms.

Adam Tauman Kalai, Microsoft Research New England

Confirmed Speakers & Participants

Talks will be presented virtually or in-person as indicated in the schedule below.

- Scott Field, University of Massachusetts Dartmouth

Lecture Videos

Scott Field

University of Massachusetts Dartmouth

February 20, 2019 • 12:00 AM