Organizing Committee

George Mason University

Union College

University of Alberta

California State University, Channel Islands

Rutgers University

University of Wisconsin

UCLA

Rutgers University

Rutgers University

Middle East Technical University

Wilfrid Laurier University

Abstract

Meanwhile, mathematics and computer science are two of three disciplines with the lowest percentage of women attaining PhDs (28% and 24%, respectively). Creating explicit research bridges between these groups will provide networks of women with similar research interests, and will also create pathways for the female-friendly culture in statistics to make its way into mathematics and computer science. This workshop will generate research collaborations, and highlight mathematics as a primary contributor. Successful applicants will be assigned to a research problem based on their expertise. Each group will aim to include a more senior person in each of statistics, machine learning, and mathematics.

Partially supported by NSF-HRD 1500481 - AWM ADVANCE grant. Additional support for some participant travel will be provided by DIMACS in association with its Special Focus on Information Sharing and Dynamic Data Analysis. Co-sponsored by Brown's Data Science Initiative.


Projects

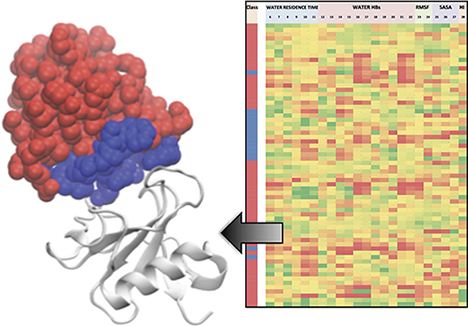

Predictive Models for Molecular Data

Group Leaders TBA

Using data generated in past molecular modeling projects, participants will be encouraged to apply a range of machine learning and informatics techniques to analyze the data and build/optimize predictive models. Some prior models have been built with a hundred or so experimental data points, while other bimolecular models have utilized over 50,000 data points.

Through these exercises, participants will learn the applicability of varied machine learning methods to datasets of different sizes (both the number of data points and the length of feature vectors). In addition, best practices in cross-validation will be discussed, so as to give participants a sense of how to organize their data in a way that is most rigorous when existing relationships among the data points are known. It may be possible to include deep learning methods as well.
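As a minimal sketch of the cross-validation point above, the following shows one way to keep related data points on the same side of every train/test split. The grouping labels (here called `scaffolds`), the function name `group_k_fold`, and the greedy balancing rule are illustrative assumptions, not the procedure the project will necessarily use.

```python
from collections import defaultdict


def group_k_fold(groups, n_splits):
    """Yield (train_idx, test_idx) so that no group appears on both sides."""
    members = defaultdict(list)
    for idx, g in enumerate(groups):
        members[g].append(idx)
    if n_splits > len(members):
        raise ValueError("n_splits cannot exceed the number of distinct groups")

    # Greedy balancing: place the largest groups first, each into the
    # fold that currently holds the fewest data points.
    folds = [[] for _ in range(n_splits)]
    for g in sorted(members, key=lambda g: (-len(members[g]), str(g))):
        smallest = min(range(n_splits), key=lambda f: len(folds[f]))
        folds[smallest].extend(members[g])

    for f in range(n_splits):
        test = sorted(folds[f])
        held_out = set(test)
        train = [i for i in range(len(groups)) if i not in held_out]
        yield train, test


# Hypothetical example: eight measurements drawn from four related families.
scaffolds = ["A", "A", "A", "B", "B", "C", "C", "D"]
splits = list(group_k_fold(scaffolds, n_splits=2))
for train, test in splits:
    print("train:", train, "test:", test)
```

Splitting by group rather than by individual data point avoids the situation where a model is scored on points that are near-duplicates of its training data, which would tend to overstate predictive accuracy.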

Representation of Data as Multi-Scale Features and Measures

Group Leaders TBA

The methods are very general and have been demonstrated on network and sensor data sets. Multi-resolution inference has been proposed by X. Meng as an important new research challenge in statistics. This research collaboration would enable assessment of the applicability of multiscale representation approaches to other types of data (e.g., molecular modeling data used to study obstructive sleep apnea, and possibly a cyber-security related data set). It would also introduce this approach to statistical researchers who may be interested in statistical fusion, data depth, and confidence measures. In addition, new multi-scale methods for representation of data as measures characterizing mathematical properties of the data (e.g., geometric properties) could be developed and applied.

Inferential Models Founded in Statistical and Topological Learning

Group Leaders TBA

A systematic review and meta-analysis of the pediatric obstructive sleep apnea (OSA) literature reveals a link between craniofacial morphology and OSA prevalence in pediatric patients. This relationship has led to the hypothesis that experienced dentists and orthodontists may be able to identify children at risk of developing OSA simply by observing a child’s craniofacial characteristics.

In this project, we propose a study of real-world pediatric OSA datasets in order to (1) develop a statistical and topological learning (STL) model that can accurately predict OSA severity, and (2) verify whether OSA severity measurements given by orthodontists are comparable to those given by sleep specialists via polysomnography (PSG). OSA data involve a substantial number of variables, including time series data (e.g., EOG, EMG, and ECG), three-dimensional images of the face and upper airway, medical history, dental measurements, various questionnaires, blood and urine samples, and other sleep-disordered breathing risk factors. To handle them, we propose a review of existing STL methods in order to achieve the above research goals. In particular, we will incorporate techniques from various fields, including time series analysis, shape analysis, persistent homology, zigzag persistence, graphical LASSO, and tensor regression, as well as numerous clustering techniques from statistics and machine learning.

Stochastic Signal Processing for High-Dimensional Data

Deanna Needell (UCLA)

Participants will learn the mathematical background to such acquisition and reconstruction approaches, and we will explore the impact on many applications of interest to modern researchers and practitioners. In particular, we will select several applications of interest to the group and design stochastic algorithms for those frameworks. The participants will run experiments on synthetic data from those applications, and work on theoretical guarantees for the methods.

The Hubness Phenomenon in High Dimensional Spaces

Group Leaders TBA

High-dimensional data are ubiquitous: text, images, and biological data can easily contain tens of thousands of features. Often, though, data have a lower intrinsic dimensionality and lie in a subspace embedded within the full-dimensional space.

In this project we'll investigate the relationship between the hubness phenomenon and the intrinsic dimensionality of data, with the ultimate goal of recovering the subspaces data lie within. We are particularly interested in the scenario where the relevant subspace depends on the location within the input space. The findings of this study may enable effective subspace clustering of data, as well as outlier identification.
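For readers new to the term, hubness is usually measured through the k-occurrence count N_k(x), the number of times a point x appears among the k nearest neighbors of other points. The sketch below computes N_k and its skewness for synthetic Gaussian data in a low and a high dimension. The sample size, the choice k = 5, the Euclidean metric, and all function names are illustrative assumptions. The hubness literature reports that the N_k distribution becomes more right-skewed as dimensionality grows, and this small experiment is one way to probe that, not a result of the project.

```python
import random


def k_occurrences(points, k):
    """N_k(x): how often each point appears in other points' k-NN lists."""
    counts = [0] * len(points)
    for i, p in enumerate(points):
        dists = []
        for j, q in enumerate(points):
            if i != j:
                # Squared Euclidean distance preserves neighbor ordering.
                d2 = sum((a - b) ** 2 for a, b in zip(p, q))
                dists.append((d2, j))
        dists.sort()
        for _, j in dists[:k]:
            counts[j] += 1
    return counts


def skewness(values):
    """Standardized third moment; positive values indicate a right tail."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5 if m2 > 0 else 0.0


def gaussian_cloud(n, dim, rng):
    """Synthetic data: n points with i.i.d. standard normal coordinates."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]


rng = random.Random(0)
n_points, k = 120, 5
results = {}
for dim in (3, 50):
    counts = k_occurrences(gaussian_cloud(n_points, dim, rng), k)
    results[dim] = (counts, skewness(counts))
    print(f"dim={dim:3d}  max N_k={max(counts):3d}  skewness={results[dim][1]:.2f}")
```

Points with unusually large N_k are the hubs, and points with N_k = 0 never appear in any neighbor list, which is why this statistic is a natural starting point for both subspace clustering and outlier identification.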

Codes for Data Storage with Queues for Data Access

Group Leaders TBA

Large volumes of data, which are being collected for the purpose of knowledge extraction, have to be reliably, efficiently, and securely stored. Retrieval of large data files from storage has to be fast (and often anonymous and private). This project is concerned with big data storage and access, and its relevant mathematical disciplines include algebraic coding and queueing theory. Large-scale cloud data storage and distributed file systems, e.g., Amazon EBS and Google FS, have become the backbone of many applications such as web searching, e-commerce, and cluster computing.

Cloud services are implemented on top of a distributed storage layer that acts as a middleware to the applications, and also provides the desired content to the users, whose interests range from performing data analytics to watching movies. Coding theory has been essential in providing solutions for reliable, efficient, and secure telecommunications, but these solutions are inadequate when storing and moving very large files across networks is necessary. Many new deep problems that arise in such circumstances simultaneously belong to both fundamental coding and queueing theory, but have so far been mostly separately addressed.

Participants in this project will, according to their preferences regarding combinatorics, algebra, and probability, learn about and work on some coding and/or queueing problems in the era of big data. The hope is that some will take an interest in both of these interwoven and indispensable aspects of big data storage and access. Undergraduates are welcome.