Organizing Committee

Yale University

Yale University

University of Minnesota

University of Texas at Austin

University of Massachusetts Amherst

University of Toronto

University of Bonn

University of Michigan

Abstract

The organizers would like to thank James Bremer, Ronald Coifman, Jingfang Huang, Peter Jones, Mauro Maggioni, Yair Minsky, Vladimir Rokhlin, Wilhelm Schlag, John Schotland, Amit Singer, Stefan Steinerberger, and Mark Tygert for their help.


Workshop Schedule

Monday, June 26, 2023

8:30 - 8:50 AM EDT

Check In

8:50 - 9:00 AM EDT

Welcome

9:00 - 9:45 AM EDT

Weil-Petersson curves, traveling salesman theorems, and minimal surfaces

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:15 AM EDT

New perspectives on inverse problems: stochasticity and Monte Carlo method

11:30 AM - 12:15 PM EDT

Quantum Signal Processing

12:30 - 2:30 PM EDT

Lunch/Free Time

2:30 - 3:15 PM EDT

Nonlocal PDEs and Quantum Optics

3:30 - 4:00 PM EDT

Coffee Break

4:00 - 4:45 PM EDT

Wigner-Smith Methods for Computational Electromagnetics and Acoustics

5:00 - 6:30 PM EDT

Reception

Tuesday, June 27, 2023

9:00 - 9:45 AM EDT

Of Crystals and Corals

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:15 AM EDT

On the Connectivity of Chord-arc Curves

11:30 AM - 12:15 PM EDT

Multiscale Diffusion Geometry for Learning Manifolds, Flows and Optimal Transport

12:30 - 2:00 PM EDT

Lunch/Free Time

2:00 - 3:00 PM EDT

Poster Session Blitz

3:30 - 5:30 PM EDT

Poster Session / Coffee Break

4:30 - 4:45 PM EDT

Remarks - Peter Jones (Part 1)

4:45 - 5:00 PM EDT

Remarks - Peter Jones (Part 2)

Wednesday, June 28, 2023

9:00 - 9:45 AM EDT

Some old and some newer perspectives on data-driven modeling of complex systems

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:15 AM EDT

How will we plan our stakes in deep haystacks? Science in the AI Spring

11:30 AM - 12:15 PM EDT

On the Connection between Deep Neural Networks and Kernel Methods

12:25 - 12:30 PM EDT

Group Photo (Immediately After Talk)

12:30 - 2:30 PM EDT

Open Problem Session Lunch

2:30 - 3:30 PM EDT

Panel Part 1 (Introductions / Presentations)

3:30 - 4:00 PM EDT

Coffee Break

4:00 - 5:00 PM EDT

Panel Part 2

Thursday, June 29, 2023

9:00 - 9:45 AM EDT

Some estimation problems for high-dimensional stochastic dynamical systems with structure

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:15 AM EDT

Low distortion embeddings with bottom-up manifold learning

11:30 AM - 12:15 PM EDT

Curvature on Combinatorial Graphs

12:30 - 2:30 PM EDT

Networking Lunch

2:30 - 3:15 PM EDT

Reduced label complexity for tight linear regression

3:30 - 4:15 PM EDT

Randomized algorithms for linear algebraic computations

5:00 - 7:00 PM EDT

Banquet (offsite)

Friday, June 30, 2023

9:00 - 9:45 AM EDT

Project and Forget: Solving Large-Scale Metric Constrained Problems

10:00 - 10:30 AM EDT

Coffee Break

10:30 - 11:15 AM EDT

Randomized tensor-network algorithms for random data in high-dimensions

11:30 AM - 12:15 PM EDT

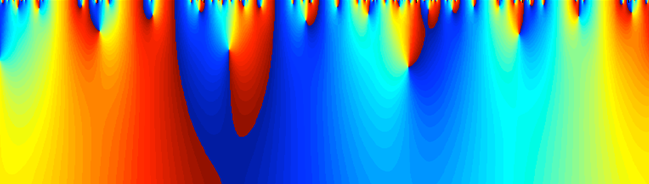

Integral equations and singular waveguides

12:30 - 4:00 PM EDT